Tag: Microsoft

-

Israel and US companies are using Gaza as a tech test lab for AI; Germany may soon benefit from a new ‘Pact’

The Gaza war is also a testing ground for increasingly automated systems. A German-Israeli ‘Cyber and Security Pact’ could ensure the transfer of technologies. Gaza has long served Israel as a testing ground for disruptive military technologies. At the turn…

-

Big Brother Awards: Reprimand for digital coercion

The German association Digitalcourage awards the annual Negative Prize – Zoom, the Ministry of Finance and Microsoft are honoured. The “data sin” is an offence that often goes unpunished. The Digitalcourage association has been drawing attention to this in Germany…

-

Planned regulation: EU Commission postpones mandatory screening of encrypted chats

Providers of messenger and cloud services will be allowed to screen voluntarily for prosecutable child abuse content; this screening is to become mandatory across the EU. The Council and Commission are pushing for an extension to other crime areas.…

-

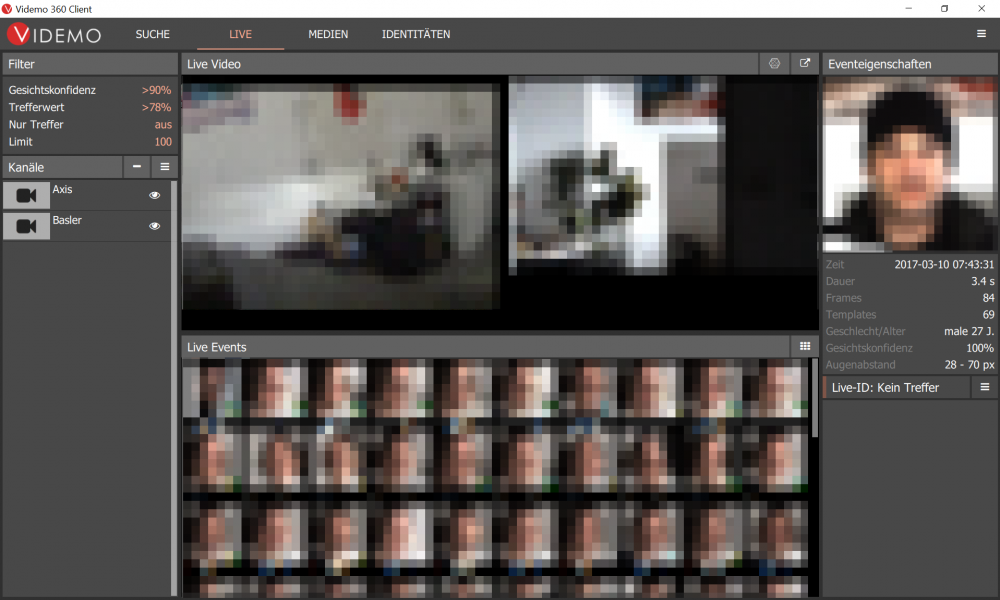

G20 in Hamburg: Data protection commissioner considers face recognition illegal

The Hamburg police have been researching facial analysis software for several years and used it for the first time after the G20 summit. The technology accesses the nationwide INPOL file for criminal offenders maintained by the Federal Criminal Police…